Rémi Bardenet

Rémi Bardenet is a researcher at CNRS and University of Lille, France. His interests span statistics, machine learning, signal processing, and applications to particle physics and cardiac biology. Since 2013, he has been trying to convince people at coffee breaks that repulsive point processes are the next generation of integration and subsampling tools. He is the PI of the ERC project ‘Blackjack’ on “Monte Carlo integration with repulsive point processes” and of the French AI chair ‘Baccarat’ on “Bayesian learning for expensive models”. Before joining CNRS, Rémi received his PhD from University Paris-Sud in 2012 and was a postdoc at the University of Oxford in 2013-2014. See http://rbardenet.github.io/ for more details and contact information.

Lucy Colwell

Lucy Colwell is a faculty member in chemistry at the University of Cambridge and a researcher in the applied science group at Google. Her primary interests are in the application of machine learning approaches to molecular design. Before moving to Cambridge, Lucy received her PhD from Harvard University and was a member at the Institute for Advanced Study in Princeton, NJ. In 2018, Lucy was appointed a Simons Investigator in Mathematical Modeling of Living Systems.

Jennifer Gillenwater

Jennifer Gillenwater is a researcher in the Modeling and Data (MAD) Science group at Google NYC. Her primary interests include differential privacy and diversification techniques for machine learning. Prior to joining Google, she completed her PhD on the topic of approximate inference for determinantal point processes at the University of Pennsylvania under the supervision of Prof. Ben Taskar, and a postdoc at the University of Washington under the supervision of Prof. Jeff Bilmes.

Stefanie Jegelka

Stefanie Jegelka is an X-Consortium Career Development Associate Professor at MIT EECS, and a member of CSAIL, IDSS, and the Center for Statistics and Machine Learning at MIT. Before that, Stefanie was a postdoc in the AMPlab and computer vision group at UC Berkeley, and a PhD student at the Max Planck Institutes in Tuebingen and at ETH Zurich.

Stefanie’s research is in algorithmic machine learning, and spans modeling, optimization algorithms, theory and applications. In particular, she has been working on exploiting mathematical structure for discrete and combinatorial machine learning problems, for robustness and for scaling machine learning algorithms.

Michael Mahoney

Michael W. Mahoney is at the University of California at Berkeley in the Department of Statistics and at the International Computer Science Institute (ICSI). He works on algorithmic and statistical aspects of modern large-scale data analysis. Much of his recent research has focused on large-scale machine learning, including randomized matrix algorithms and randomized numerical linear algebra, geometric network analysis tools for structure extraction in large informatics graphs, scalable implicit regularization methods, computational methods for neural network analysis, and applications in genetics, astronomy, medical imaging, social network analysis, and internet data analysis. He received his PhD from Yale University with a dissertation in computational statistical mechanics, and he has worked and taught at Yale University in the mathematics department, at Yahoo Research, and at Stanford University in the mathematics department. Among other things, he is on the national advisory committee of the Statistical and Applied Mathematical Sciences Institute (SAMSI), he was on the National Research Council’s Committee on the Analysis of Massive Data, he co-organized the Simons Institute’s fall 2013 and 2018 programs on the foundations of data science, he ran the Park City Mathematics Institute’s 2016 PCMI Summer Session on The Mathematics of Data, and he runs the biennial MMDS Workshops on Algorithms for Modern Massive Data Sets. He is currently the Director of the NSF/TRIPODS-funded FODA (Foundations of Data Analysis) Institute at UC Berkeley.

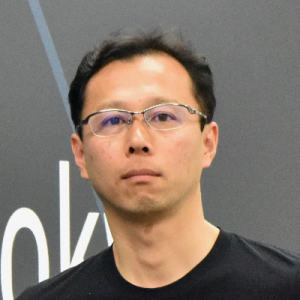

Takayuki Osogami

Takayuki Osogami is a senior technical staff member at IBM Research – Tokyo. He received his Ph.D. in computer science from Carnegie Mellon University in 2005, and a B.Eng. degree in electronic engineering from the University of Tokyo in 1998. His research focuses on the theory and applications of sequential decision making with a particular emphasis on risk-sensitive and multi-agent decision making. He is currently leading a project on integrating reinforcement learning and tree search for real-time multi-agent sequential decision making.

Yaron Singer

Yaron Singer is the Gordon McKay Professor of Computer Science and Applied Mathematics at Harvard University. He was previously a postdoctoral researcher at Google Research and obtained his PhD from UC Berkeley. He is the recipient of the NSF CAREER award, the Sloan fellowship, the Facebook faculty award, Google faculty awards, the 2012 Best Student Paper Award at the ACM conference on Web Search and Data Mining, the 2010 Facebook Graduate Fellowship, and the 2009 Microsoft Research PhD Fellowship.